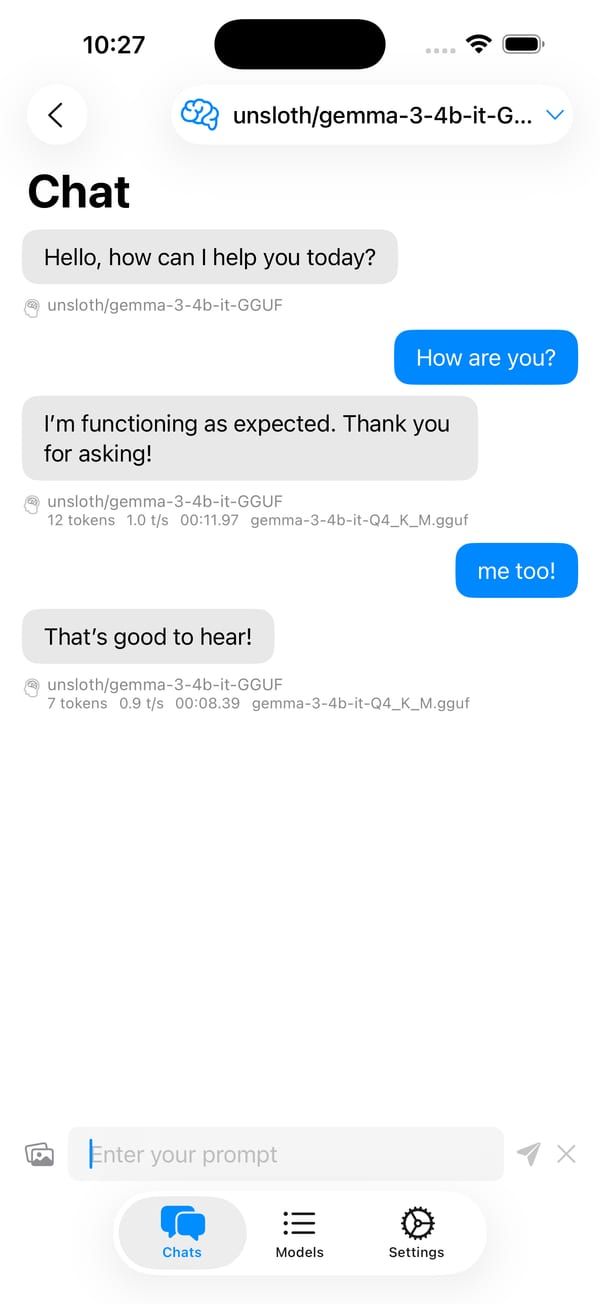

Language models that run on your device.

Download an open-weights model from Hugging Face and chat with it offline. No accounts, no cloud, no subscription. iPhone, iPad, and Mac.

Free. iOS 18.5+ or macOS 15.5+.

A full chat app that runs entirely on-device.

Not a demo, not a toy. Conversations persist, markdown renders, images attach. Everything stays local.

Open model catalog

Browse Hugging Face. Download. Go.

Search the Hugging Face catalog from inside the app. Filter by format, parameter count, and quantization. Downloads resume if interrupted. Nothing is gatekept: pick whatever model works for you.

Real chat

Conversations that stick around.

Multiple chats in the sidebar. Rename, delete, search. Markdown formatting renders. Token counts are shown per conversation. Every message persists locally until you delete it.

Vision

Show it a picture.

Vision-capable models can see the images you attach. Take a photo with the camera or pick from your library. Same workflow as a hosted assistant, except the image never leaves your device.

Tunable

Per-model temperature, context, and prompts.

Dial temperature, top-K, top-P, and context window for each model. Set a system prompt and initial greeting. Defaults that work out of the box, settings that don't fight you when you want control.
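For readers curious what those dials do, here is a minimal sketch of how temperature, top-K, and top-P are commonly combined when picking the next token. It is illustrative only and not NeutralLM's actual code: the function name `sample_token`, its default values, and the order of the steps are assumptions, and real runtimes differ in detail.

```python
import math
import random


def sample_token(logits, temperature=0.8, top_k=40, top_p=0.95, rng=random):
    """Pick one token index from raw logits (hypothetical helper, not an app API)."""
    if temperature <= 0:
        # Assumption: treat a non-positive temperature as greedy decoding.
        return max(range(len(logits)), key=lambda i: logits[i])

    # Temperature: dividing logits by a value below 1 sharpens the
    # distribution; a value above 1 flattens it.
    scaled = [x / temperature for x in logits]

    # Top-K: keep only the k highest-scoring candidates (at least one).
    order = sorted(range(len(scaled)), key=lambda i: scaled[i], reverse=True)
    order = order[: max(1, top_k)]

    # Softmax over the survivors, subtracting the peak for numerical stability.
    peak = scaled[order[0]]
    weights = [math.exp(scaled[i] - peak) for i in order]
    total = sum(weights)
    probs = [w / total for w in weights]

    # Top-P: keep the smallest prefix whose cumulative probability reaches top_p.
    kept_indices, kept_probs, cumulative = [], [], 0.0
    for idx, p in zip(order, probs):
        kept_indices.append(idx)
        kept_probs.append(p)
        cumulative += p
        if cumulative >= top_p:
            break

    # Draw from what is left; random.choices renormalizes the weights.
    return rng.choices(kept_indices, weights=kept_probs, k=1)[0]
```

Lower temperature, smaller top-K, and smaller top-P all narrow the set of likely tokens, which tends to make replies more predictable. Raising them does the opposite.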

Apple-native

Built for Apple hardware.

SwiftUI throughout. MLX for Apple Silicon speed. llama.cpp compiled for Metal. Universal app. One download, works on iPhone, iPad, and Mac with the same chats in sync.

Private

Nothing leaves your device.

The only network traffic is model downloads from the hosts you choose. Prompts, responses, images, and chat history stay on your device. No analytics, no telemetry, no account.

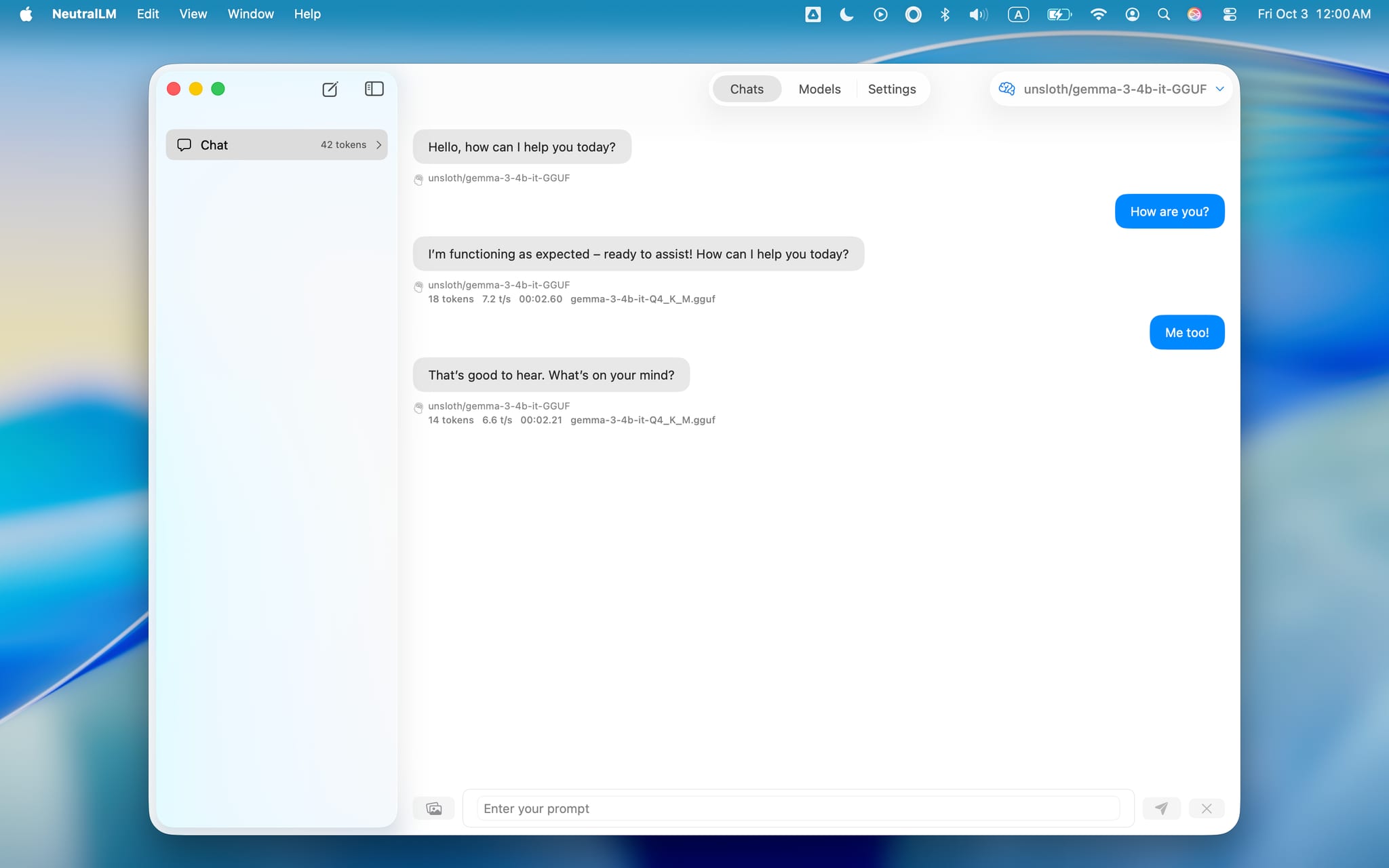

Also on Mac

The full app on one screen.

Sidebar, chat, and model browser. No sheets sliding over, no panels to tap through. Everything visible at once.

Why local matters

The model sees your prompt. Nothing else does.

Hosted assistants typically log the messages you send. NeutralLM doesn't. There's no "us" in the loop. Inference runs on the same device you're typing on. Conversations persist locally in the app. Delete a chat and it's actually gone.

Frequently asked questions

More questions? Ask in the community forum.

Is this really free?

Yes. Fully free. No subscription, no in-app purchases, no ads. The whole app on iPhone, iPad, and Mac.

Does it collect my data?

No. Prompts, responses, conversations, and attached images stay on your device. No analytics, no telemetry, no account required.

Where can I get help or share feedback?

The community forum at community.neutrallm.com. Questions, bug reports, and feature requests all go there.

Does it need an internet connection?

Only to download a model. After that, prompts, responses, and any images you attach are all processed offline on your device.

Does it work with images?

Yes, with vision-capable models. Attach a photo from your library or take one with the camera. The model sees it the same way a hosted chat assistant would.

Which models does it run?

GGUF models via llama.cpp, and MLX models on Apple Silicon via mlx-swift. Browse the Hugging Face catalog inside the app and download directly.

Will a model run on my device?

The model browser shows RAM requirements and a compatibility note per model, with recommendations tuned to your device tier. If you're unsure, start with the model suggested during onboarding.
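As a rough rule of thumb, a model's weights need about parameter count × bits per weight ÷ 8 bytes of memory. The sketch below shows that arithmetic. It is not how the app computes its compatibility notes: the function names and the `usable_fraction` budget are assumptions for illustration, and real usage is higher once the context (KV cache) and runtime overhead are counted.

```python
def estimate_weight_memory_gib(parameter_count, bits_per_weight):
    """Approximate memory for the weights alone, in GiB (hypothetical helper)."""
    total_bytes = parameter_count * bits_per_weight / 8
    return total_bytes / (1024 ** 3)


def probably_fits(parameter_count, bits_per_weight, device_ram_gib, usable_fraction=0.5):
    """Compare the weight estimate against a share of device RAM.

    usable_fraction is an assumed budget: the OS and other apps need memory
    too, so only part of the physical RAM is treated as available.
    """
    budget_gib = device_ram_gib * usable_fraction
    return estimate_weight_memory_gib(parameter_count, bits_per_weight) <= budget_gib


# A 7-billion-parameter model at 4 bits per weight: about 3.26 GiB of weights.
print(round(estimate_weight_memory_gib(7_000_000_000, 4), 2))
```

By this estimate, the same 7B model at 16 bits per weight needs roughly 13 GiB, which is why quantized models are the usual choice on phones and tablets.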